Overview

Git is an open-source distributed version control system. It lets multiple users work together on the same code base, maintain multiple versions through branching, and keep track of changes made locally and in remote repositories.

Git, GitHub, and GitLab

Git is a version control system that lets you manage and keep track of your source code history. It is open source and is available as a command-line interface (CLI) for saving and merging changes to source code.

GitHub and GitLab are cloud-based services built on top of Git. They let you manage Git repositories and offer tools that help with conflict resolution and change tracking around a single central source of truth. GitHub, GitLab, and similar services provide tools and user interfaces designed to help users better manage their repositories. Some of the additional features offered by these services are:

- User management

- UI to open and merge pull requests

- Automation and CI/CD services

- Webhooks

- Notifications

Git for data-science teams

Git is less commonly used in data science. People often work in small groups and tend to keep their code in a local folder, in Google Drive, or in Dropbox, and they try to make sure that collaborators never work on the same files at the same time to avoid creating conflicts or breaking changes.

But Git and services like GitHub offer many features that can streamline the work of modern data-science teams, even small ones that do not plan to contribute to larger projects:

- Central source of truth: When using git, there is usually a single central repository (called “origin” or “remote”) which the individual users will clone to their local machine (called “local” or “clone”).

- Keep track of changes: Git works by saving code changes as a series of discrete “commits”.

- Going back in history: By making commits, it becomes possible to easily go back to a previous version of the project at any time.

- Collaboration and simultaneous work: Git merges non-overlapping changes automatically and flags true conflicts for manual resolution, which allows multiple users to work on the same file at the same time. Every time a user commits changes, they can send them to the central repository (“origin”) with an operation called “push”. After a “review”, the changes can be “merged” into the main branch.

- Diffs: Git compares text files line by line, so two users can work on the same Python or LaTeX file and easily see the changes made by each other.
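The features listed above can be tried out locally. The following is a minimal sketch, not a recommended setup: a bare repository in a temporary directory stands in for the hosted “origin”, and the file name `analysis.py`, the user identity, and the commit messages are made up for illustration.

```shell
# Throwaway identity so commits work without a global Git config.
export GIT_AUTHOR_NAME="Example User" GIT_AUTHOR_EMAIL="user@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

work="$(mktemp -d)"

# "origin": a bare repository standing in for the central repo on GitHub or GitLab.
git init -q --bare "$work/origin.git"
git -C "$work/origin.git" symbolic-ref HEAD refs/heads/main

# "local": each collaborator works in their own clone.
# (Git warns that the cloned repository is empty; that is expected here.)
git clone -q "$work/origin.git" "$work/local"
cd "$work/local"
git symbolic-ref HEAD refs/heads/main

# Keep track of changes: every saved step is a commit.
printf 'rows = 100\n' > analysis.py
git add analysis.py
git commit -q -m "Add analysis script"

printf 'rows = 200\n' > analysis.py
git commit -q -am "Increase row count"

# Send the commits to the central repository.
git push -q origin main

# Inspect the history, the line-level diff, and an older version of the file.
git log --oneline
git diff HEAD~1 HEAD
git show HEAD~1:analysis.py
```

On a hosting service, the push would typically go to a feature branch and be merged into the main branch after review.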

Learning Git

Git has a large vocabulary and a steep learning curve. There are many tutorials online, but they can be difficult for new users. These guides provide a quick summary of the main terms and commands that you will often hear or use as a contributor. GitHub also has a quick start guide that walks step by step through the basics of a Git project using GitHub’s additional tools.

File states

Git tracks changes per file, and each tracked file is in one of the following states:

- modified: file is modified but not committed

- deleted: file is deleted but not committed

- staged: file is modified, marked to be part of your next commit

- committed: file is safely stored in your local database
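The states above can be observed with `git status --short`. The sketch below runs in a throwaway repository; the file name `params.txt` and the identity are illustrative assumptions.

```shell
# Throwaway identity so commits work without a global Git config.
export GIT_AUTHOR_NAME="Example User" GIT_AUTHOR_EMAIL="user@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

repo="$(mktemp -d)"
cd "$repo"
git init -q

printf 'alpha = 0.1\n' > params.txt
git add params.txt
git commit -q -m "Add parameters"

# modified: changed in the working directory, not yet staged.
printf 'alpha = 0.2\n' > params.txt
state_modified="$(git status --short)"    # " M params.txt"

# staged: marked to be part of the next commit.
git add params.txt
state_staged="$(git status --short)"      # "M  params.txt"

# committed: stored in the local database, so status reports nothing.
git commit -q -m "Tune alpha"
state_committed="$(git status --short)"   # empty

# deleted: removed from the working directory, deletion not yet committed.
rm params.txt
state_deleted="$(git status --short)"     # " D params.txt"
git checkout -- params.txt                # restore the committed version

printf 'modified:  [%s]\nstaged:    [%s]\ncommitted: [%s]\ndeleted:   [%s]\n' \
  "$state_modified" "$state_staged" "$state_committed" "$state_deleted"
```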

Handling datasets

One important thing to know about Git is that it handles binaries and large datasets poorly. For this reason, it is generally advised not to push datasets to a Git repository.

Git is a version control system designed around text files, so it provides the right abstraction for source code and related content such as configuration, requirements, Dockerfiles, JSON files, dependencies, and docs. You may store small CSV files, but generally speaking, Git is not meant for storing datasets, so you should not check in datasets or folders containing images or other media.

In a machine learning project, several dimensions need to be tracked and versioned:

- Code

- Configuration

- Metadata and metrics

- Artifacts and outputs

- Data

Git has extensions such as Git LFS that keep large files outside the repository and store lightweight references to them in Git. While they serve a purpose and ease some technical limits, such as size and speed, they do not solve the core problem encountered by data-science and machine learning teams, and this is where a tool like Polyaxon shines.

Ignoring patterns

Since we established that users should not track their data in Git, it is important to learn how to configure your repositories to avoid accidentally staging and committing data or large folders containing training datasets.

Git reads a file called .gitignore in which users can list paths and patterns to ignore, e.g.:

# ignore files patterns

*.png

*.jpeg

*.wav

*.rar

# ignore dataset folders

imaging-datasets/

Avoid committing secrets

In machine learning projects, users often deal with datasets stored on S3 or GCS, or need to load data from a data warehouse or a database. It is very important to remember that you should never commit passwords, tokens, keys, certificates, or any other sensitive data to Git, even when the repository is private.

Because every repository, even a private one, can be cloned to laptops or other machines, you should never store secrets as part of the repository. Instead, expose secrets as environment variables or request them from a vault system.
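As a rough sketch of the environment-variable approach: the variable name `DATASET_TOKEN`, its placeholder value, and the local `.env` file are assumptions made for this example, not a convention required by Git or by any particular storage service.

```shell
repo="$(mktemp -d)"
cd "$repo"
git init -q

# Normally set outside the repository (shell profile, CI secret store, vault client).
export DATASET_TOKEN="placeholder-not-a-real-token"

# Fail early with a clear message if the variable is missing.
: "${DATASET_TOKEN:?DATASET_TOKEN must be set in the environment}"

# If a local env file is used for convenience, keep it out of version control.
printf '.env\n' > .gitignore
printf 'DATASET_TOKEN=%s\n' "$DATASET_TOKEN" > .env

git add .
git status --short          # lists .gitignore only; .env is ignored

# check-ignore exits with status 0 when the path matches an ignore rule.
git check-ignore -q .env && echo ".env is ignored"
```

Scripts in the repository then read `DATASET_TOKEN` from the environment, so the value itself never appears in a commit.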

Force pushing

The push command has a --force flag. Although it serves a purpose, data scientists who are not very experienced with Git should avoid using it.

Force pushing without a good understanding of how Git works might accidentally overwrite the history and delete important work made by other users, or even by the same user.
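To make the risk concrete, here is a small simulation with two hypothetical collaborators, Alice and Bob, sharing a bare repository in a temporary directory. All names and files are illustrative. It shows that Git rejects a plain push when the remote holds commits the local clone lacks, which is the safeguard that `--force` bypasses, and one way to integrate the remote work instead.

```shell
# Throwaway identity so commits work without a global Git config.
export GIT_AUTHOR_NAME="Example User" GIT_AUTHOR_EMAIL="user@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

work="$(mktemp -d)"
git init -q --bare "$work/origin.git"
git -C "$work/origin.git" symbolic-ref HEAD refs/heads/main

# Alice publishes the first commit.
git clone -q "$work/origin.git" "$work/alice"
cd "$work/alice"
git symbolic-ref HEAD refs/heads/main
echo "step 1" > pipeline.txt
git add pipeline.txt
git commit -q -m "Add pipeline notes"
git push -q origin main

# Bob clones, then Alice pushes another commit that Bob does not have.
git clone -q "$work/origin.git" "$work/bob"
echo "alice" > alice.txt
git add alice.txt
git commit -q -m "Add Alice's work"
git push -q origin main

# Bob commits on top of his outdated copy and tries a plain push.
cd "$work/bob"
echo "bob" > bob.txt
git add bob.txt
git commit -q -m "Add Bob's work"
if git push -q origin main 2>/dev/null; then
  push_result="accepted"
else
  push_result="rejected"
fi
echo "plain push: $push_result"

# `git push --force` at this point would replace origin's history with Bob's
# and drop Alice's second commit. Integrating her work first avoids that.
git pull -q --rebase origin main
git push -q origin main
```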

Commit messages

A commit message is a descriptive text that is added to the commit object by the user who made the commit. It has a title line, and an optional body. Commit messages are used in many ways including:

- To help a future reader quickly understand the changes and why they were made.

- To assist with easily undoing specific changes.

- To prepare release notes or bump versions for a release.

It’s recommended to follow some guidelines when committing changes:

- Keep it short: If you need to add a larger description use a body to further explain the changes.

- Make semantic changes: a commit should contain changes that fix one bug or make a single enhancement.

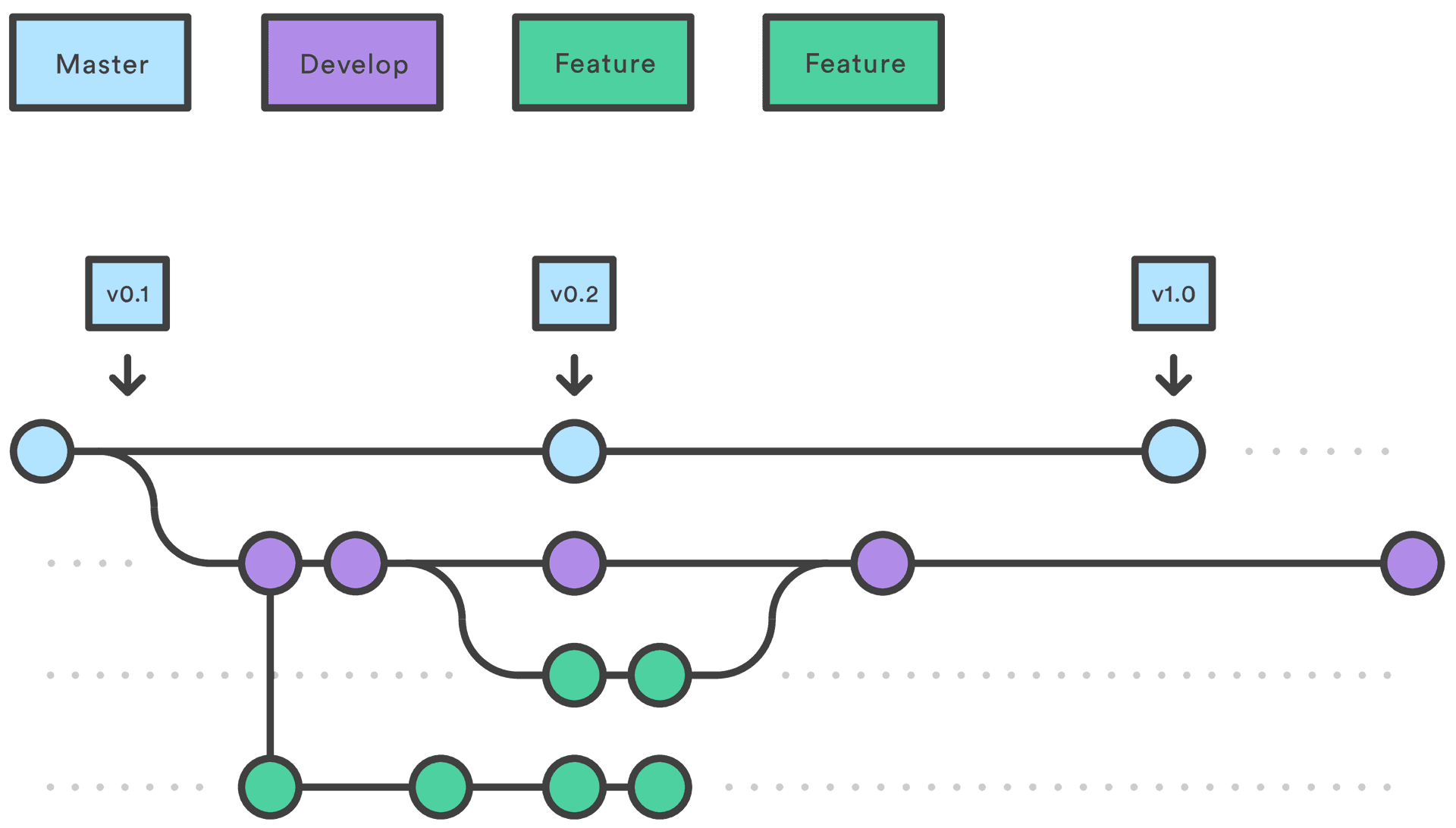
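A short sketch of these guidelines in practice, using a throwaway repository; the file `config.yaml` and the messages are invented for illustration. Passing `-m` twice gives a title line followed by a body, and because each commit holds one semantic change, a single change can be undone with `git revert`.

```shell
# Throwaway identity so commits work without a global Git config.
export GIT_AUTHOR_NAME="Example User" GIT_AUTHOR_EMAIL="user@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

repo="$(mktemp -d)"
cd "$repo"
git init -q

# First -m is the short title, second -m becomes the optional body.
printf 'epochs: 10\n' > config.yaml
git add config.yaml
git commit -q -m "Add training config" \
  -m "Start with 10 epochs so that a full run stays short."

git log -1 --format=%s    # prints the title line
git log -1 --format=%b    # prints the body

# A second, self-contained change.
printf 'epochs: 10\nseed: 42\n' > config.yaml
git commit -q -am "Pin random seed"

# Undo only that change by adding a new commit that reverses it.
git revert --no-edit HEAD > /dev/null
git log --oneline
```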

Using branches

Instead of committing all changes to the same branch, usually master or main, users should create a separate branch for each enhancement or fix. This makes it possible to work in parallel on multiple features or bug fixes without mixing the changes, and to switch to a different branch while waiting for a blocking upstream change.

Pull requests are a slightly more advanced feature, but if you are working in a mature data science team on production projects with several collaborators, pull requests will help you propose your changes to other users to:
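The following sketch shows two independent branches in a throwaway repository. The branch names `fix-typo` and `add-metrics` and the files are made up for the example.

```shell
# Throwaway identity so commits work without a global Git config.
export GIT_AUTHOR_NAME="Example User" GIT_AUTHOR_EMAIL="user@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

repo="$(mktemp -d)"
cd "$repo"
git init -q
git symbolic-ref HEAD refs/heads/main

printf '# project\n' > README.md
git add README.md
git commit -q -m "Initial commit"

# One branch per fix or enhancement.
git checkout -q -b fix-typo
printf '# Project\n' > README.md
git commit -q -am "Capitalize README title"

# Start unrelated work from main without carrying over the typo fix.
git checkout -q main
git checkout -q -b add-metrics
printf 'accuracy\n' > metrics.txt
git add metrics.txt
git commit -q -m "List tracked metrics"

# Back on main, neither change is present until its branch is merged.
git checkout -q main
git branch
git merge -q fix-typo     # bring in the fix; add-metrics stays separate
```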

- Get feedback and review

- Spread knowledge

- Get help

Contribution process

You will often hear the term “fork”. Forking is the process of making a copy of a repository, making changes, and then proposing those changes as an enhancement or a bug fix. The process usually goes as follows:

- Fork the repository

- Make the fix

- Commit the changes

- Open a pull request

- Get review and commit additional changes

- Approve the pull request

- Merge the changes
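The local side of this process can be sketched as follows. Forking, opening the pull request, review, and merging happen on the hosting service, so here two bare repositories in a temporary directory stand in for the upstream project and for the fork. The repository layout, the branch name `fix-readme`, and the remote name `upstream` are assumptions for illustration.

```shell
# Throwaway identity so commits work without a global Git config.
export GIT_AUTHOR_NAME="Example User" GIT_AUTHOR_EMAIL="user@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

work="$(mktemp -d)"

# Stand-in for the upstream project with one existing commit.
git init -q "$work/seed"
cd "$work/seed"
git symbolic-ref HEAD refs/heads/main
printf 'A smal project\n' > README.md
git add README.md
git commit -q -m "Initial commit"
git clone -q --bare "$work/seed" "$work/upstream.git"

# Fork the repository: on a hosting service this is a button; here it is a copy.
git clone -q --bare "$work/upstream.git" "$work/fork.git"

# Clone the fork and keep a reference to the original project.
git clone -q "$work/fork.git" "$work/clone"
cd "$work/clone"
git remote add upstream "$work/upstream.git"

# Make the fix on a dedicated branch and commit it.
git checkout -q -b fix-readme
printf 'A small project\n' > README.md
git commit -q -am "Fix typo in README"

# Publish the branch to the fork; the pull request is then opened
# from fork:fix-readme to upstream:main in the hosting service's UI.
git push -q origin fix-readme
git remote -v
```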